Over the past few months, something subtle but significant has been happening across several of our digital services in the Department for Education (DfE). We’ve seen a rise in impressions coming from AI‑mediated search, at the same time as visits to our actual pages have flattened or dipped. More people are now receiving answers through Google’s AI Overviews, Microsoft Copilot and other emerging assistants, without ever visiting the services we’ve designed for them. At first, this felt like progress; faster access to information is not something to resist. But as we looked closer, a more complicated picture emerged.

The new reality: answers without journeys

Public services are not just providers of information. They are structured journeys intended to help people make sense of systems they may never have encountered before. These journeys carry context, safeguards, clarifications and next steps that ensure people understand not just a single answer, but the path around it. AI assistants, however, don’t really understand journeys; they understand questions. They extract the answer they think is most relevant, remove the supporting detail around it, and present it as a self‑contained response. The risk is not usually that they are wrong, though that can happen, but that they are incomplete in ways that matter.

What happens when users don’t know what they don’t know?

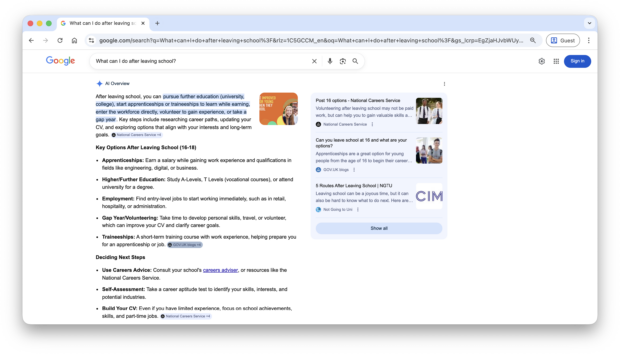

This incompleteness is especially challenging for the kinds of users many of our services are built to support. Not everyone arrives with a clear question. A teenager leaving school may not know to search for “apprenticeships”, “T Levels” or “vocational pathways”. They just know they need to figure out what to do next. Parents concerned about their child’s online safety often don’t know the terminology of the problems they’re worried about. Care‑experienced young people may not know that certain entitlements or support options even exist. A well‑designed service helps reveal possibilities, not just respond to questions. It helps people articulate what they need (or need to do), especially when they don’t yet have the language for it. AI tools, by contrast, only answer what they’re asked. They meet users where they already are, which can limit discovery and reinforce gaps in understanding.

Should we change the way we work or return to our foundations?

As a design team, we’ve been asking ourselves whether this moment requires us to rethink how we work, or whether it simply calls for a renewed commitment to the principles that have guided digital government for more than a decade. We keep coming back to the same idea: the fundamentals of user‑centred design still hold. If anything, they’re becoming more important. Clear, well‑structured content; journeys that reflect real user needs; collaboration with policy; plain English; transparency; consistency; these are still the foundations that make services trustworthy and safe.

What’s changing is that we now need to design with the expectation that much of what we publish will be read indirectly, atomised, summarised or reinterpreted by systems we don’t control. This means thinking differently about the resilience of the content we produce. If a paragraph on one of our pages is lifted out of context, does it still make sense on its own? Does it say something that’s safe and accurate even when separated from the journey it belongs to? Can it stand alone without misleading someone who might never see the full service? These are difficult questions, because government services are rarely simple enough for a single answer to be the whole story. Yet if AI systems are going to present fragments of our work in isolation, then we need to ensure those fragments are robust.

This also means working more closely with policy colleagues. Much of the nuance that could be lost in an AI‑generated summary is key policy detail: eligibility rules, safeguarding considerations, rights and entitlements that depend on specific conditions. If we want AI tools to represent this information responsibly, the underlying policy explanations we publish need to be concise, unambiguous and consistent across channels. Early collaboration between design and policy becomes not just useful, but essential.

One area we haven’t explored yet, though it’s increasingly on our minds, is testing our services through AI systems themselves. Just as we test journeys with real users, we may soon need to test them with the AI models that interpret our content on behalf of users. This isn’t about validating the AI; it’s about understanding how faithfully our content is being surfaced, where it is being distorted, and what happens when someone relies on an AI answer instead of visiting a service. It’s early days, but it feels inevitable that this will become part of the design and assurance process for public services. We’re interested to see what patterns or guidance may come from GDS’ recent GOV.UK AI Studio work.

What makes this moment particularly important is that it touches the heart of what user‑centred design is for. Much of our work is built around supporting people who do not arrive with perfect knowledge of their needs, who do not know the terminology, who may not understand the system they are stepping into (because they shouldn't need to). If AI‑mediated agents or answers become the dominant entry point, we need to be sure that people who lack confidence or familiarity are not disadvantaged further. We cannot assume that the most complete and supported experience will be the one users see.

The challenge ahead

This isn’t a challenge any single department can solve alone. The move towards AI‑mediated access is happening across the whole digital ecosystem, not just in pockets. The risks are shared, as are the opportunities. We need cross‑government conversation about what this means for safeguarding, clarity, accessibility, statutory accuracy, measurement, accountability and the way we structure information at source. We need to think about what patterns or conventions might help us create content that is “machine‑interpretable” without losing the human intent behind it. And we need to keep hold of the idea that design in government is not about answers alone; it’s about meaningful, safe and supportive journeys that help people understand their choices and needs.

The shift we are seeing is real, and it will shape the next decade of public services. But if we approach it thoughtfully, grounded in our core principles and open to new ways of understanding how users encounter our work, then there is an opportunity here too. AI may change the pathways into government services, but with the right foundations, we can still ensure that what people receive is accurate, trustworthy and rooted in genuine user need. And if we share what we learn with one another, we can navigate this transition together.

If you’re seeing similar patterns in your own services, or asking the same kinds of questions, I’d be keen to hear from you in the comments below. This is a moment for collective learning, and the earlier we begin the conversation, the better prepared we’ll all be.

You can also follow updates from the GOV.UK AI Studio, and if you are a Civil Servant you can join the #ai-for-designers channel on UK Government Digital Slack.

2 comments

Comment by anne-louise posted on

Interesting read on a problem I have spent much time pondering!

Comment by Robin Hayden posted on

Might the dip in traffic demonstrate that there is a user need for information to be provided without the journeys GOV.UK often uses? Whilst making information available through a journey provides a more digestible way of providing complex information and context, it can appear as an obstacle if one is in a hurry and just wants an answer. Perhaps if GOV.UK was improved to be better at providing bitesize answers without the journey, but with context to highlight there are other things users might need to consider, there wouldn't be so big a dip in traffic.